Installing CUDA TK 8 and Tensorflow on a Clean Ubuntu 16.04 Install


- Download the driver

- Download the CUDA Toolkit

- Install the driver you downloaded on the first step

- Install the CUDA toolkit

- Test the installation

- Install cuDNN

- Install native linear algebra libraries

- Install python stuff

- Install Tensorflow 1.0 into a virtualenv

- Uninstalling all nvidia drivers

- Unable to load nvidia-drm kernel module

- The distribution-provided pre-install script failed! Are you sure you want to continue?

- /sbin/ldconfig.real: /usr/local/cuda/lib64/libcudnn.so.5 is not a symbolic link

- ImportError: libcudnn.so.5: cannot open shared object file: No such file or directory

Sister Blog post: Setup Keras, Theano Backend on Ubuntu 16.04 (this is a similar post aimed at Keras+Theano instead)

This is a small tutorial to guide you through installing Tensorflow with GPU enabled, on top of the CUDA + cuDNN frameworks by NVIDIA.

If you want more information about how to install Ubuntu 16.04 on a Dell Notebook (should work for other vendors too), look at Troubleshooting Ubuntu 16.04 Installation/Graphics card on a new Dell Notebook.

Download the driver

Download the .run installation file for NVIDIA driver version 375 from the NVIDIA downloads page.

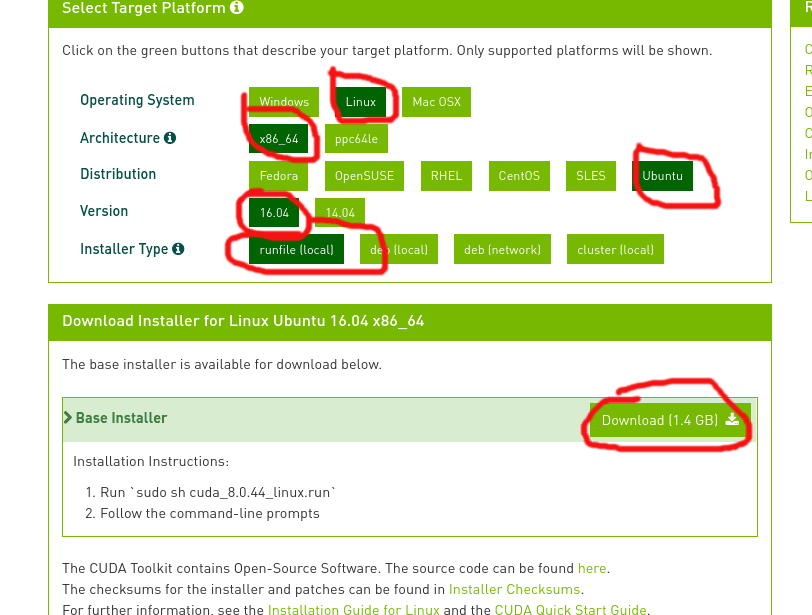

Download the CUDA Toolkit

Again, download the .run installation file for the 8.0 version of the CUDA Toolkit: NVIDIA - CUDA Downloads

download the .run file. NOT THE DEB FILE!!


Install the driver you downloaded on the first step

Before running the .run file, you must shut down X:

$ sudo service lightdm stop

After shutting down X, hit Ctrl+Alt+F1 (or F2, F3 and so on) and log in again.

Go to the directory where the .run file was downloaded and execute it:

$ sudo chmod +x NVIDIA-Linux-x86_64-375.26.run

$ sudo ./NVIDIA-Linux-x86_64-375.26.run
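Once the installer has finished, a quick check along these lines can confirm that the driver is actually in place. This is only a sketch: `driver_status` is a hypothetical helper name, not something the installer provides, and it assumes that `nvidia-smi` (which ships with the driver) ends up on your PATH.

```shell
# Hypothetical helper: report the GPU name and driver version via nvidia-smi.
# Prints a hint and returns 1 if nvidia-smi is not available.
driver_status() {
    if ! command -v nvidia-smi >/dev/null 2>&1; then
        echo "nvidia-smi not found: the driver is probably not installed"
        return 1
    fi
    # Query only the fields we care about, as CSV without a header row.
    nvidia-smi --query-gpu=name,driver_version --format=csv,noheader
}
```

Calling `driver_status` after the install should print your GPU name together with the driver version you just installed.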

Install the CUDA toolkit

Go to the directory where the .run file was downloaded and run the installer. When it asks whether to install the bundled driver, answer no: you already installed the driver in the previous step.

$ sudo chmod +x cuda_8.0.44_linux.run

$ sudo sh cuda_8.0.44_linux.run

Configure the LD path:

- Create a file called /etc/ld.so.conf.d/cuda-8-0.conf with the following contents:

/usr/local/cuda/lib

- Run

$ sudo ldconfig

Test the installation

Go to the directory where the CUDA samples were installed and compile the programs:

$ cd /path/to/NVIDIA_CUDA-8.0_Samples/

$ make

Run deviceQuery under the newly-created bin/ directory and check the output (some lines omitted):

felipe@felipe-Inspiron-7559:~/NVIDIA_CUDA-8.0_Samples/bin/x86_64/linux/release$ ./deviceQuery

./deviceQuery Starting...

CUDA Device Query (Runtime API) version (CUDART static linking)

Detected 1 CUDA Capable device(s)

Device 0: "GeForce GTX 960M"

CUDA Driver Version / Runtime Version 8.0 / 8.0

CUDA Capability Major/Minor version number: 5.0

Total amount of global memory: 4044 MBytes (4240375808 bytes)

( 5) Multiprocessors, (128) CUDA Cores/MP: 640 CUDA Cores

GPU Max Clock rate: 1176 MHz (1.18 GHz)
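If you would rather not read through the whole output, the check can be scripted. The sketch below relies on the `Result = PASS` line that deviceQuery prints at the very end of a successful run (it is among the lines omitted from the listing above). `device_query_passed` is a hypothetical helper name introduced here for illustration.

```shell
# Hypothetical helper: read deviceQuery output on stdin and report pass/fail.
device_query_passed() {
    if grep -q '^Result = PASS'; then
        echo "deviceQuery: PASS"
    else
        echo "deviceQuery: no PASS line found"
        return 1
    fi
}
```

From the release directory you would use it as `./deviceQuery | device_query_passed`.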

Install cuDNN

Download and install the cuDNN framework to proceed.

As of now (April 2017) the Tensorflow docs suggest you install version 5.1 of this library.

Extract the downloaded .tgz and copy the files to the CUDA directory:

$ sudo tar -xzvf cudnn-8.0-linux-x64-v5.1.tgz

$ sudo cp cuda/include/cudnn.h /usr/local/cuda/include

$ sudo cp cuda/lib64/libcudnn* /usr/local/cuda/lib64

$ sudo chmod a+r /usr/local/cuda/include/cudnn.h /usr/local/cuda/lib64/libcudnn*

Add this line at the end of ~/.bashrc:

export CUDA_HOME=/usr/local/cuda

Load the file:

$ source ~/.bashrc

Edit file /etc/ld.so.conf.d/cuda-8-0.conf like this:

/usr/local/cuda/lib64

/usr/local/cuda/extras/CUPTI/lib64

Run

$ sudo ldconfig
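To double-check which cuDNN version ended up in the CUDA directory, you can read it straight from the header you copied. This is a sketch under one assumption: that your cudnn.h defines `CUDNN_MAJOR`, `CUDNN_MINOR` and `CUDNN_PATCHLEVEL` as plain integers, which is how the v5.1 header is laid out. `cudnn_version` is a hypothetical helper name.

```shell
# Hypothetical helper: print the cuDNN version declared in a cudnn.h header.
# Usage: cudnn_version [path-to-cudnn.h]
cudnn_version() {
    header=${1:-/usr/local/cuda/include/cudnn.h}
    if [ ! -r "$header" ]; then
        echo "cannot read $header" >&2
        return 1
    fi
    # Pick the integer that follows each "#define CUDNN_<PART>" line.
    major=$(awk '$1 == "#define" && $2 == "CUDNN_MAJOR" {print $3}' "$header")
    minor=$(awk '$1 == "#define" && $2 == "CUDNN_MINOR" {print $3}' "$header")
    patch=$(awk '$1 == "#define" && $2 == "CUDNN_PATCHLEVEL" {print $3}' "$header")
    echo "$major.$minor.$patch"
}
```

Running `cudnn_version` with no arguments reads /usr/local/cuda/include/cudnn.h; for this tutorial the result should start with 5.1.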

Install native linear algebra libraries

$ sudo apt-get install libopenblas-dev liblapack-dev gfortran

Install python stuff

$ sudo apt-get install git python-dev python3-dev python-numpy python3-numpy build-essential python-pip python3-pip python-virtualenv swig python-wheel libcurl3-dev

Install Tensorflow 1.0 into a virtualenv

Create a Python 3 virtualenv and activate it:

$ virtualenv --system-site-packages -p python3 tf-venv3

$ source tf-venv3/bin/activate

Update pip itself:

(tf-venv3)$ pip install --upgrade pip

Install Tensorflow (as per the docs):

(tf-venv3)$ pip install --upgrade tensorflow-gpu

Test your Tensorflow installation

In your virtualenv, open a Python session and type import tensorflow as tf. If all went well, you should see no error messages when you import the library:

Python 3.5.2 (default, Apr 20 2017, 17:05:23)

[GCC 5.4.0 20160609] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> import tensorflow as tf

>>>
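A clean import only shows that the library loads. To see whether Tensorflow actually picked up the GPU, you can list the devices it detects. The sketch below wraps that in a hypothetical shell helper called `tf_devices`; it assumes the virtualenv is active (so `python` is the virtualenv's interpreter) and uses `device_lib.list_local_devices()` from `tensorflow.python.client`.

```shell
# Hypothetical helper: list the devices Tensorflow can see from the active virtualenv.
tf_devices() {
    if ! python -c 'import tensorflow' >/dev/null 2>&1; then
        echo "tensorflow is not importable from this python"
        return 1
    fi
    # Print one line per device: its type (CPU or GPU) and its name.
    python -c '
from tensorflow.python.client import device_lib
for device in device_lib.list_local_devices():
    print(device.device_type, device.name)
'
}
```

If the setup worked, the output of `tf_devices` should include a GPU entry next to the CPU one. If only a CPU shows up, revisit the CUDA and cuDNN steps.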

Troubleshooting / Uninstalling

Uninstalling all nvidia drivers

If you've installed the driver via the .run file, do this:

$ sudo nvidia-uninstall

Unable to load nvidia-drm kernel module

Disable Secure Boot (in the BIOS settings) then try again.
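If you are not sure whether Secure Boot is currently on, you may be able to check from a terminal before rebooting into the BIOS. This sketch assumes the `mokutil` tool is installed, which is not guaranteed on every system; `secure_boot_state` is a hypothetical helper name.

```shell
# Hypothetical helper: report the Secure Boot state using mokutil, if present.
secure_boot_state() {
    if ! command -v mokutil >/dev/null 2>&1; then
        echo "mokutil not found; check the firmware setup screen instead"
        return 1
    fi
    # Prints whether Secure Boot is enabled or disabled on EFI systems.
    mokutil --sb-state
}
```

Call `secure_boot_state` once before changing the BIOS setting and once after, to confirm the change took effect.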

The distribution-provided pre-install script failed! Are you sure you want to continue?

This is not an error message per se. Just select "Continue installation".

/sbin/ldconfig.real: /usr/local/cuda/lib64/libcudnn.so.5 is not a symbolic link

This may happen when you run sudo ldconfig after copying cuDNN files.

After installing cuDNN and copying the extracted files to /usr/local/cuda/lib64, the symlinks may end up broken. For example, a plain cp copies the files the links point to rather than the links themselves.

So go to /usr/local/cuda/lib64/ and run ls -lha libcudnn*.

The exact version of libcudnn.so.5.1.5 may be a little different for you (maybe libcudnn.so.5.1.10). In that case, adapt the commands accordingly.

You should see two symlinks (bold teal) and one single file. Something like this:

/usr/local/cuda/lib64$ ls -lha libcudnn*

lrwxrwxrwx 1 root root 13 Dez 25 23:56 libcudnn.so -> libcudnn.so.5

lrwxrwxrwx 1 root root 17 Dez 25 23:55 libcudnn.so.5 -> libcudnn.so.5.1.5

-rwxr-xr-x 1 root root 76M Dez 25 23:27 libcudnn.so.5.1.5

If libcudnn.so and libcudnn.so.5 are not symlinks then this is the reason why you got this error. If so, this is what you need to do:

/usr/local/cuda/lib64$ sudo rm libcudnn.so

/usr/local/cuda/lib64$ sudo rm libcudnn.so.5

/usr/local/cuda/lib64$ sudo ln -s libcudnn.so.5.1.5 libcudnn.so.5

/usr/local/cuda/lib64$ sudo ln -s libcudnn.so.5 libcudnn.so

Run sudo ldconfig again and there should be no errors.
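If you need to repeat this fix (for instance after copying the cuDNN files again), the relinking can be wrapped in a small function. This is a sketch, not part of cuDNN: `relink_cudnn` is a hypothetical helper that recreates both links as symbolic links with `ln -s`, and it takes the name of the real library file as an argument so you can adapt it to your exact version.

```shell
# Hypothetical helper: rebuild the libcudnn.so -> libcudnn.so.5 -> real file chain.
# Usage: relink_cudnn <lib-dir> <real-file-name>
relink_cudnn() {
    dir=$1
    real=$2
    # The real library must be a regular file, not itself a symlink.
    if [ ! -f "$dir/$real" ] || [ -L "$dir/$real" ]; then
        echo "$dir/$real is not a regular file" >&2
        return 1
    fi
    # -s makes a symbolic link, -f replaces whatever is there already.
    ln -sf "$real" "$dir/libcudnn.so.5"
    ln -sf libcudnn.so.5 "$dir/libcudnn.so"
}
```

For the layout shown above the call would be `relink_cudnn /usr/local/cuda/lib64 libcudnn.so.5.1.5`. Writing to that directory needs root, so run it from a root shell or prefix the two `ln` lines with sudo.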

ImportError: libcudnn.so.5: cannot open shared object file: No such file or directory

See the item above. The fix is the same.