Preventing Bad Data from Entering your App Makes your Life Easier!

The world of code and software has well defined rules and few exceptions.

When, however, we use code to write applications and information systems, we're venturing into the world of humans (who are, after all, the ones who derive value from our applications), which has few, ill-defined rules and a lot of exceptions.

The software world needs fixed rules and few exceptions. The human world, whose interactions define what code needs to be written, is infinitely malleable and any rules have many exceptions.

If you've ever tried to model the day-to-day operations of a modern company into a computer system that's supposed to automate and optimize those processes, you know that this is neither easy nor clean. It's decidedly unpredictable and messy.

Process modeling is a world in and of itself. I won't dwell on the ins and outs of process modeling; I'll focus on practical advice to make your experience in software development run smoother.

Suppose you have modeled everything you need and your system has gone into production. People start using it: creating, reading, updating and deleting data (this is what the CRUD acronym stands for).

But every now and then (or quite frequently if your system is used by a lot of people), strange errors start popping up: users start using your system in ways you hadn't thought of, funny data appears in the database and a decent amount of your time is spent correcting broken functionality rather than implementing new features.

Every now and then, bad data causes errors at runtime which force you to work reactively (fixing bugs) rather than proactively (thinking about and implementing new features).

Lately I've spotted a recurring pattern when I need to fix something or find out why something that used to work now doesn't. It's generally due to bad data in the database.

And the fix to bad data problems is nearly always implementing more data validation when users enter data into your system.

What is meant here by bad data?

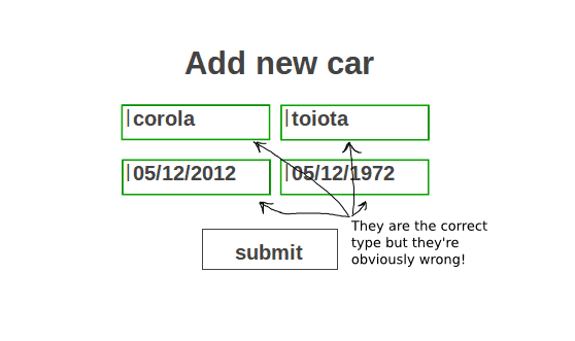

It's data that may be syntactically correct (i.e. there's a date where your system expects a date, or an integer where your system expects an integer) but is semantically wrong.

In other words, data that is generally correct, but not for your system.

Example: Car Inventory System

Suppose you have written a car inventory system for a car dealership in your neighbourhood to keep track of the cars they have in stock.

Such a system would have a Car entity, which would probably have attributes like manufacture_date (the date the car was manufactured) and entry_date (the date the car arrived at the dealership to be sold).

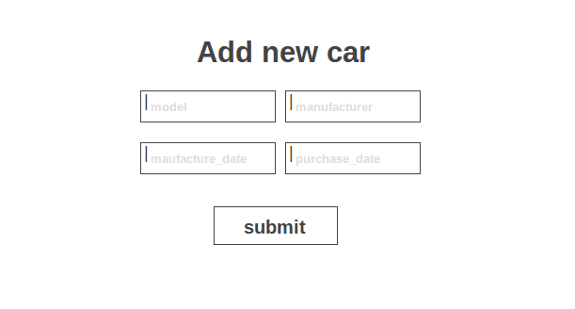

This system would probably have a view called Insert new Car that prompts the user to enter a new car into the system:

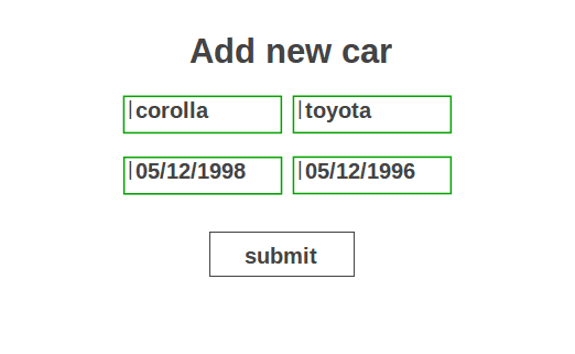

Suppose you have correctly added validators to check that users only enter valid dates in each of the date inputs and valid strings (not numbers) in each of the text inputs.
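As a rough illustration, type-only validation of that form might look like the sketch below. It uses plain Python rather than any particular framework, and the manufacturer and model field names are assumptions made for the example (only manufacture_date and entry_date come from the entity described above).

```python
from datetime import date


def parse_car_form(form: dict) -> dict:
    """Type-only validation: every field must have the right type, nothing more."""
    cleaned = {}
    # "manufacturer" and "model" are hypothetical text inputs.
    for field in ("manufacturer", "model"):
        value = str(form.get(field, "")).strip()
        # Text inputs must be non-empty and must not be purely numeric.
        if not value or value.isdigit():
            raise ValueError(f"{field} must be a non-numeric string")
        cleaned[field] = value
    for field in ("manufacture_date", "entry_date"):
        # date.fromisoformat raises ValueError on malformed date strings.
        cleaned[field] = date.fromisoformat(form[field])
    return cleaned


# Every field has the right type, so this record is accepted,
# even though the manufacturer is misspelled and the car "arrived"
# at the dealership years before it was manufactured.
car = parse_car_form({
    "manufacturer": "Toyotta",
    "model": "Corolla",
    "manufacture_date": "2015-06-01",
    "entry_date": "2012-01-15",
})
```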

Type validation helps, but it is nowhere near enough. Look at what could happen when users start using your system:

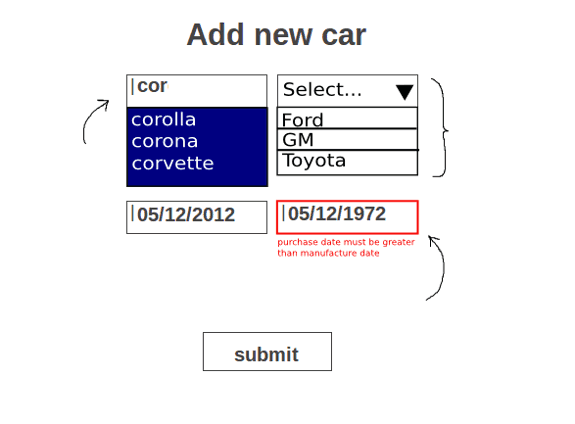

There's much you can do here; your web framework probably provides some validators for you out of the box.

Note some changes:

- Autocomplete inputs instead of regular text inputs: this helps prevent spelling mistakes as well as slight variations in names (which cause problems in databases).

- Drop Down lists instead of free-text inputs: this helps avoid spelling mistakes and forces users to choose from a set of preset (unmistakably valid) values.

- Semantic Validators that don't just validate whether data is the right type, but validate that data is semantically correct given your system's context. In the example, both dates are valid but it doesn't make sense to input a purchase_date that is prior to the car's manufacture_date! (A car must be manufactured before it is sold!)

Smite these inconsistencies before they enter your system and grow into something worse! Think runtime errors!
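The ideas in the list above can be sketched on the server side as well. The following is a minimal, framework-agnostic example: KNOWN_MANUFACTURERS and validate_car are hypothetical names, and the preset list stands in for whatever backs your drop-down or autocomplete input.

```python
from datetime import date
from typing import List

# Hypothetical preset values backing the drop-down / autocomplete input.
KNOWN_MANUFACTURERS = {"Ford", "Honda", "Toyota"}


def validate_car(manufacturer: str, manufacture_date: date,
                 purchase_date: date) -> List[str]:
    """Return a list of semantic errors; an empty list means the record is acceptable."""
    errors = []
    # Same rule the drop-down enforces in the UI: only preset values are allowed.
    if manufacturer not in KNOWN_MANUFACTURERS:
        errors.append(f"unknown manufacturer: {manufacturer!r}")
    # Both values are valid dates, but their order must make sense.
    if purchase_date < manufacture_date:
        errors.append("purchase_date is prior to manufacture_date")
    return errors


# A misspelled manufacturer and an impossible pair of dates are both rejected.
errors = validate_car("Toyotta", date(2015, 6, 1), date(2012, 1, 15))
```

Collecting every error in a list, rather than raising on the first one, is a design choice that lets the form show the user all the problems at once.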

Always Remember

Don't give any more freedom to users than they absolutely need. Given the slightest opportunity, users will make mistakes and (mostly unwittingly) introduce bad data into your systems!

Bad data will cause you headaches in the worst possible moments.

So validate data as much as you can before it enters your system!

Afterthought

Think of data validation in web applications like application-level asserts in your code.

I confess I used to be prejudiced against using asserts in my code (they're not very elegant) but I catch myself using them every now and then, in particular when I'm coding something mathy or otherwise related to complex calculations.

As someone whose opinion I respect told me, they're useful for documentation purposes, as they make clear what expectations you have wherever they appear, helping anyone who may need to study or verify/revise your code later on (or even yourself in a couple of months).

That's aside from the obvious advantage of having code break earlier rather than later (you spot inconsistencies before they cause a runtime error and blow up in the face of your users) - which is an all-around good practice and hard to argue against.
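To make the comparison concrete, here is a small sketch of asserts used in a calculation; weighted_average is a made-up function chosen only for illustration.

```python
def weighted_average(values: list, weights: list) -> float:
    # Expectations are stated up front: they document the contract
    # and make the function fail early when it is violated.
    assert len(values) == len(weights), "one weight per value"
    assert all(w >= 0 for w in weights), "weights must be non-negative"
    total = sum(weights)
    assert total > 0, "at least one weight must be positive"
    return sum(v * w for v, w in zip(values, weights)) / total


result = weighted_average([10.0, 20.0], [1.0, 3.0])  # 17.5
```

Keep in mind that Python removes assert statements when run with the -O flag, so they suit internal expectations like these; checks on data entered by users are better written as explicit validation.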